AI Agents in Business: What Agentic AI Actually Means

The word "agent" has taken over every AI conversation in 2026. Vendors use it. Consultants use it. Your CTO probably used it in the last board deck. The problem is that most people using the word mean different things by it, and some of them mean nothing at all.

I want to cut through that. We build software for businesses in Perth and across Australia, and over the last twelve months we have gone from experimenting with AI agents to deploying them in production for real clients. This is what we have learned.

What an AI agent actually is

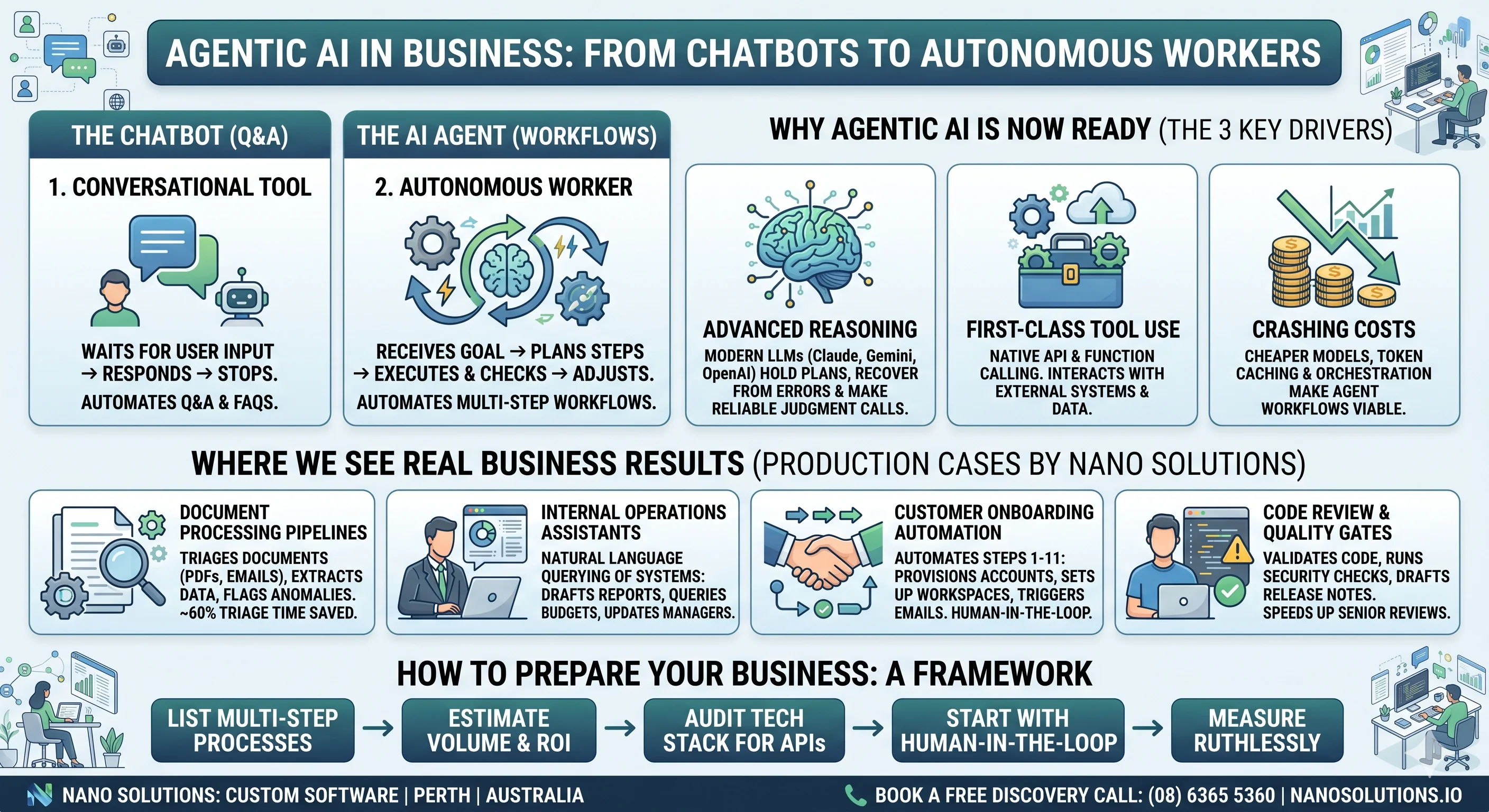

A chatbot waits for you to type something, responds, and stops. An agent keeps going: it receives a goal, breaks it into steps, picks the right tools, executes, checks the result, and adjusts. It loops. It reasons about what to do next. It can call APIs, read databases, send emails, update spreadsheets, or do whatever else you wire it up to.

The difference is autonomy. A chatbot is a conversation. An agent is a worker.

That distinction matters because it changes what you can automate. With a chatbot you automate Q&A. With an agent you automate workflows — the kind of multi-step, cross-system processes that currently require a person alt-tabbing between six browser tabs.
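To make the loop concrete, here is a minimal sketch in Python. It is an illustration, not how any particular framework does it: the tool names, the order ID, and `choose_next_step` are all made up for the example, and `choose_next_step` is a stub standing in for the model call so the code runs offline.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

# Hypothetical tools: plain functions the agent is allowed to call.
TOOLS: dict[str, Callable[[str], str]] = {
    "lookup_order": lambda order_id: f"order {order_id}: shipped",
    "send_email": lambda body: f"email sent: {body}",
}


@dataclass
class AgentState:
    goal: str
    # Each entry is (tool name, argument, result).
    history: list[tuple[str, str, str]] = field(default_factory=list)
    done: bool = False


def choose_next_step(state: AgentState) -> Optional[tuple[str, str]]:
    """Stand-in for the model call. A real agent would ask an LLM here.

    The decision depends on what has already happened, which is the
    part that separates an agent from a single request and response.
    """
    if not state.history:
        return ("lookup_order", "A-1001")
    last_tool, _, last_result = state.history[-1]
    if last_tool == "lookup_order":
        return ("send_email", f"Update on your order: {last_result}")
    return None  # goal reached, nothing left to do


def run_agent(goal: str, max_steps: int = 10) -> AgentState:
    state = AgentState(goal=goal)
    for _ in range(max_steps):  # hard cap so the loop always terminates
        step = choose_next_step(state)
        if step is None:
            state.done = True
            break
        tool_name, argument = step
        result = TOOLS[tool_name](argument)  # execute the chosen tool
        state.history.append((tool_name, argument, result))  # feed the result back
    return state


state = run_agent("Tell the customer where order A-1001 is")
for tool_name, _, result in state.history:
    print(tool_name, "->", result)
```

The `max_steps` cap is a design choice worth keeping in any real version: a loop that decides for itself when to stop needs an outer limit.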

Why now

Three things converged to make agentic AI practical for normal businesses, not just Silicon Valley labs:

- Models got good enough at reasoning. The jump from GPT-3.5 to GPT-4 was significant, but the jump from there to the current generation (Claude, Gemini, the latest OpenAI models) is what made agents viable. These models can hold a plan in their head, recover from errors, and make judgment calls that are right often enough to be useful.

- Tool use became a first-class feature. Modern LLMs do not just generate text. They can call functions, use APIs, and interact with external systems through structured tool calling. This is the mechanical backbone of every agent framework.

- Cost dropped far enough. Running an agent means making many LLM calls per task, sometimes dozens. A year ago that would have been prohibitively expensive for most business processes. Today, with cheaper models, prompt caching, and smarter orchestration, the economics work for a surprisingly wide range of tasks.
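Structured tool calling, the second point above, is easier to picture in code. The sketch below assumes the model returns a JSON object with a `name` and an `arguments` field; that shape is a simplification, since each provider has its own format. The `get_invoice_total` tool and its data are hypothetical.

```python
import json


def get_invoice_total(invoice_id: str) -> dict:
    # Hypothetical lookup; a real tool would query a database or an API.
    invoices = {"INV-7": 1250.0}
    return {"invoice_id": invoice_id, "total": invoices[invoice_id]}


# Registry: tool name -> (function, required argument names).
TOOL_REGISTRY = {
    "get_invoice_total": (get_invoice_total, {"invoice_id"}),
}


def dispatch_tool_call(raw_call: str) -> str:
    """Parse a model-produced tool call, validate it, run it, return JSON."""
    call = json.loads(raw_call)
    name = call["name"]
    arguments = call.get("arguments", {})
    if name not in TOOL_REGISTRY:
        return json.dumps({"error": f"unknown tool: {name}"})
    function, required = TOOL_REGISTRY[name]
    missing = required - set(arguments)
    if missing:
        return json.dumps({"error": f"missing arguments: {sorted(missing)}"})
    return json.dumps(function(**arguments))


# What a model's tool call might look like under the assumed format.
model_output = '{"name": "get_invoice_total", "arguments": {"invoice_id": "INV-7"}}'
print(dispatch_tool_call(model_output))
```

Errors go back to the model as data rather than being raised, so the agent gets a chance to correct its own call on the next step.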

Where we are seeing real results

Here are concrete patterns we have deployed or are actively building for clients. No hypotheticals.

Document processing pipelines

An insurance company receives hundreds of claim documents a week in different formats — PDFs, scanned images, emails with attachments. Previously, a team of three people triaged, extracted key fields, cross-referenced policy numbers, and routed claims to the right department. An agent now does the first pass: it reads the document, extracts structured data, validates it against the policy database, flags anomalies, and routes the claim. The humans still review — but they review a pre-processed queue instead of a raw inbox.

Time saved: roughly 60% of the manual triage effort.
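The shape of that first pass (extract, validate, flag, route) can be sketched in a few lines. This is not the client's system: the policy numbers, limits, and queue names are invented, and regular expressions stand in for the model's extraction step so the example runs on its own.

```python
import re
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical policy database: policy number -> department and cover limit.
POLICIES = {
    "POL-1001": {"department": "motor", "limit": 20000.0},
    "POL-2002": {"department": "property", "limit": 500000.0},
}


@dataclass
class Claim:
    policy_number: Optional[str]
    amount: Optional[float]
    flags: list[str] = field(default_factory=list)
    queue: str = "manual_review"  # default: a human looks at it


def extract_fields(text: str) -> Claim:
    """Stand-in for the model's extraction step, using regular expressions."""
    policy = re.search(r"POL-\d{4}", text)
    amount = re.search(r"\$([\d,]+(?:\.\d{2})?)", text)
    return Claim(
        policy_number=policy.group(0) if policy else None,
        amount=float(amount.group(1).replace(",", "")) if amount else None,
    )


def triage(text: str) -> Claim:
    claim = extract_fields(text)
    policy = POLICIES.get(claim.policy_number) if claim.policy_number else None
    if policy is None:
        claim.flags.append("unknown_policy")
    if claim.amount is None:
        claim.flags.append("missing_amount")
    elif policy and claim.amount > policy["limit"]:
        claim.flags.append("amount_over_limit")
    # Clean claims go to the department queue; flagged ones stay in manual review.
    if policy and not claim.flags:
        claim.queue = policy["department"]
    return claim


print(triage("Claim under POL-1001 for $3,450.00 after a rear-end collision."))
print(triage("Claim under POL-9999 for $800.00."))
```

Note the default: a claim lands in `manual_review` unless every check passes, so a gap in the extraction fails towards a human, not away from one.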

Internal operations assistants

A mid-size construction firm uses an agent that sits on top of their project management stack. A project manager can ask it natural-language questions ("which projects are over budget this quarter", "draft a variation notice for the Smith St job", "summarise last week's site reports for the Monday meeting") and the agent queries their systems, assembles the answer, and formats it. No dashboards to learn. No reports to pull. This is the kind of enterprise system integration that used to take months of custom dashboard development. Now it takes a well-connected agent.

Customer onboarding automation

A SaaS company had a 14-step manual onboarding process for enterprise customers. Provisioning accounts, configuring integrations, sending welcome sequences, scheduling kick-off calls, creating Slack channels, populating a shared drive. An agentic workflow now handles steps 1 through 11 autonomously. A human picks up at step 12 for the personal welcome call and final review. If you are still running processes like this manually, it might be one of the signs your business needs custom software.
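One way to structure a workflow like this is as an ordered list of steps, each marked as either safe to automate or reserved for a person. The sketch below shortens the process to three hypothetical steps; the step names, the account ID, and the `ctx` dictionary are all assumptions for illustration.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Step:
    name: str
    run: Callable[[dict], None]
    needs_human: bool = False


def provision_account(ctx: dict) -> None:
    ctx["account_id"] = "acct-001"  # hypothetical ID


def create_channel(ctx: dict) -> None:
    ctx["channel"] = f"#onboarding-{ctx['customer']}"


def welcome_call(ctx: dict) -> None:
    ctx["call_booked"] = True


STEPS = [
    Step("provision account", provision_account),
    Step("create chat channel", create_channel),
    Step("personal welcome call", welcome_call, needs_human=True),
]


def run_onboarding(customer: str) -> dict:
    """Run automated steps in order and stop at the first one needing a person."""
    ctx = {"customer": customer, "completed": [], "handed_off_at": None}
    for step in STEPS:
        if step.needs_human:
            ctx["handed_off_at"] = step.name  # record where the human takes over
            break
        step.run(ctx)
        ctx["completed"].append(step.name)
    return ctx


print(run_onboarding("acme"))
```

Keeping the handoff point in data means autonomy can be widened later by changing one flag, not by rewriting the workflow.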

Code review and quality gates

This one is close to home. We use AI agents internally to review merge requests, run security checks, validate that code follows project conventions, and draft release notes. They do not replace a senior developer's review, but they catch the mechanical stuff before the human ever looks, which means the human review is faster and more focused on design and logic.

What makes an agent fail

Not everything works. We have also seen agents fail, and the failure modes are instructive.

Vague goals. If you cannot write a clear specification for what a human should do in a workflow, an agent will not figure it out either. Agents amplify clarity and punish ambiguity.

Too many decisions. Agents work best on processes with clear rules and bounded decisions. The more subjective judgment is required — "use your gut feel", "it depends on the relationship" — the less reliable the agent becomes. You can still use AI for these tasks, but as a copilot, not an autonomous agent.

No feedback loop. An agent that gets something wrong without anyone noticing will keep getting it wrong. You need monitoring. You need humans reviewing outputs, at least by sampling. The agents that work well in production are the ones embedded in workflows where someone checks.
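Reviewing by sampling can be as simple as the sketch below: send every low-confidence output to a person, plus a random slice of everything else. The `confidence` field and the 0.8 threshold are assumptions; many agents do not produce a trustworthy confidence score, in which case the random sample alone still works.

```python
import random

REVIEW_THRESHOLD = 0.8  # assumed cut-off; tune it against real review results


def pick_for_review(outputs, sample_rate=0.1, seed=None):
    """Return every low-confidence output plus a random sample of the rest."""
    rng = random.Random(seed)  # a fixed seed makes the sample reproducible
    low = [o for o in outputs if o["confidence"] < REVIEW_THRESHOLD]
    rest = [o for o in outputs if o["confidence"] >= REVIEW_THRESHOLD]
    # Always sample at least one item when there is anything to sample.
    sample_size = min(len(rest), max(1, round(len(rest) * sample_rate))) if rest else 0
    return low + rng.sample(rest, sample_size)


# Twenty hypothetical agent outputs, two of them low confidence.
outputs = [{"id": i, "confidence": 0.5 if i < 2 else 0.95} for i in range(20)]
review_queue = pick_for_review(outputs, sample_rate=0.1, seed=42)
print([o["id"] for o in review_queue])
```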

Integration pain. The unglamorous truth is that most of the effort in building an agent is not the AI part. It is the plumbing — getting access to the right APIs, handling authentication, dealing with rate limits, mapping data between systems that were never designed to talk to each other. If your business runs on systems with poor API coverage, agents become harder to deploy.
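Rate limits are a good example of that plumbing. A common way to handle them is to retry with exponential backoff and a little random jitter. The sketch below assumes a hypothetical `RateLimitError`; real client libraries raise their own exception types, and some ship retry logic of their own.

```python
import random
import time


class RateLimitError(Exception):
    """Hypothetical error for an upstream 'too many requests' response."""


def call_with_backoff(operation, max_attempts=5, base_delay=0.5, sleep=time.sleep):
    """Call operation(), retrying on RateLimitError with growing delays."""
    for attempt in range(max_attempts):
        try:
            return operation()
        except RateLimitError:
            if attempt == max_attempts - 1:
                raise  # out of attempts, let the caller decide
            # Double the wait each time and add jitter so clients do not retry in lockstep.
            delay = base_delay * (2 ** attempt) + random.uniform(0, base_delay)
            sleep(delay)


attempts = {"count": 0}


def flaky_api_call():
    # Simulated API that rejects the first two calls.
    attempts["count"] += 1
    if attempts["count"] < 3:
        raise RateLimitError("slow down")
    return {"status": "ok"}


waits = []  # record the delays here so the demo does not actually sleep
result = call_with_backoff(flaky_api_call, sleep=waits.append)
print(result, "after", attempts["count"], "attempts")
```

Passing `sleep` in as a parameter keeps the function testable: the demo records the delays it would have waited without pausing.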

How to think about this for your business

If you are a business owner or a decision-maker wondering whether agentic AI is relevant to you, here is a simple framework:

- List your repetitive multi-step processes. The ones where someone follows roughly the same steps every time, using multiple systems, and the outcome is fairly predictable. These are your candidates.

- Estimate the volume. Agents shine when the volume justifies the build cost. Processing 10 invoices a month manually is fine. Processing 500 is a bottleneck worth automating.

- Check your integration surface. Do the systems involved have APIs? Can data flow in and out programmatically? If the answer is mostly yes, an agent can probably be built. If the answer is mostly "we copy-paste from a legacy desktop app", you have a prerequisite problem to solve first, and software modernisation might be the right first step.

- Start with human-in-the-loop. Do not aim for full autonomy on day one. Build the agent to do the work and present it to a human for approval. As trust builds and edge cases are handled, gradually increase autonomy.

- Measure ruthlessly. Track time saved, error rates, and employee satisfaction. If the agent is not measurably better than the manual process within a few weeks, re-evaluate.
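The volume question in particular comes down to arithmetic you can do before building anything. The sketch below compares the 10 and 500 invoices a month from the example above. Every other figure (minutes saved, hourly rate, running cost, build cost) is invented for illustration; substitute your own.

```python
def monthly_saving(items_per_month, minutes_saved_per_item, hourly_rate, run_cost_per_item):
    """Labour cost saved per month minus the agent's running cost."""
    labour_saved = items_per_month * minutes_saved_per_item / 60 * hourly_rate
    return labour_saved - items_per_month * run_cost_per_item


def months_to_break_even(build_cost, saving_per_month):
    """Months until the build cost is recovered, or None if it never is."""
    if saving_per_month <= 0:
        return None
    return build_cost / saving_per_month


# Assumed figures: 6 minutes saved per invoice, $60/hour staff cost,
# $0.20 per invoice in model and hosting costs, $29,000 to build.
for volume in (10, 500):
    saving = monthly_saving(volume, minutes_saved_per_item=6, hourly_rate=60, run_cost_per_item=0.20)
    print(volume, "invoices/month:", months_to_break_even(29_000, saving), "months to break even")
```

With these made-up numbers, 500 invoices a month pays the build back in about 10 months, while 10 a month takes about 500, which is the gap the volume step is meant to surface.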

The landscape is moving fast

Six months ago, building a reliable agent required significant custom engineering. Today, frameworks like LangGraph, CrewAI, and Anthropic's agent SDK have matured enough that the plumbing is more standardised. Cloud providers are shipping agent-native services. The barrier to entry is dropping every quarter.

This does not mean you should wait. The businesses that start now — even with simple, narrow agents — are building institutional knowledge about what works in their specific context. That knowledge compounds. The business that deploys its first agent in 2027 will be two years behind the one that started in 2025. We wrote about a similar dynamic with infrastructure automation using Ansible — the organisations that automated early compounded the benefits over time.

Where we come in

At Nano Solutions, we help businesses in Perth and across Australia design, build, and deploy AI-powered automation — including agentic workflows. We are not reselling a platform. We build custom software that fits your specific systems and processes, backed by secure development practices and cloud infrastructure that scales with your workload.

If you have a process that feels like it should be automated but is too complex for simple scripting, get in touch. That is exactly the kind of problem agents are built for. You can also see examples of the kind of work we do on our projects page.

Petr Cervenka

Petr is the founder and lead developer at Nano Solutions, a Perth-based custom software firm. With over a decade of experience building enterprise platforms for government and private sector clients, he leads delivery of complex projects across Australia.
